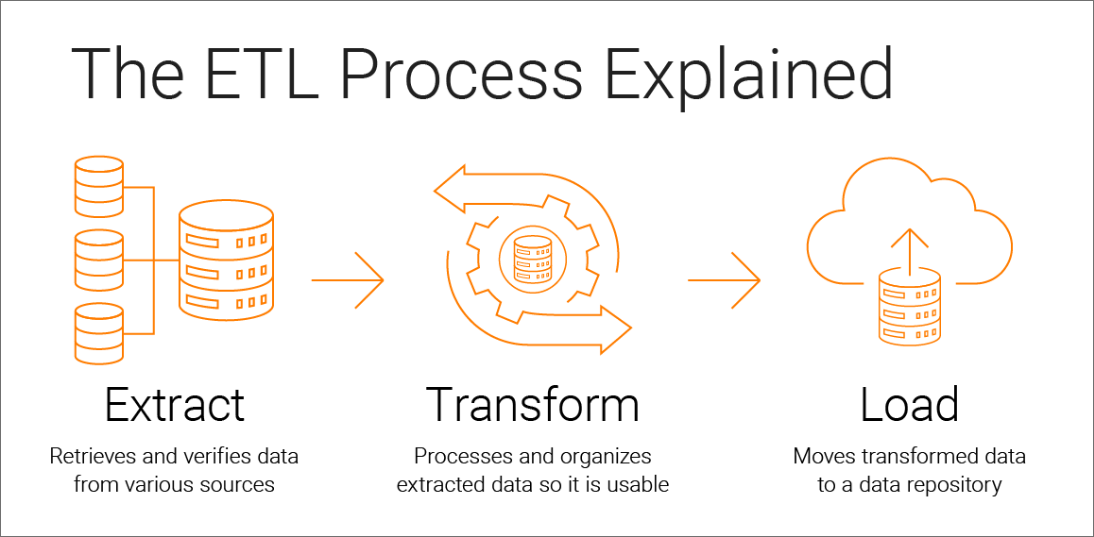

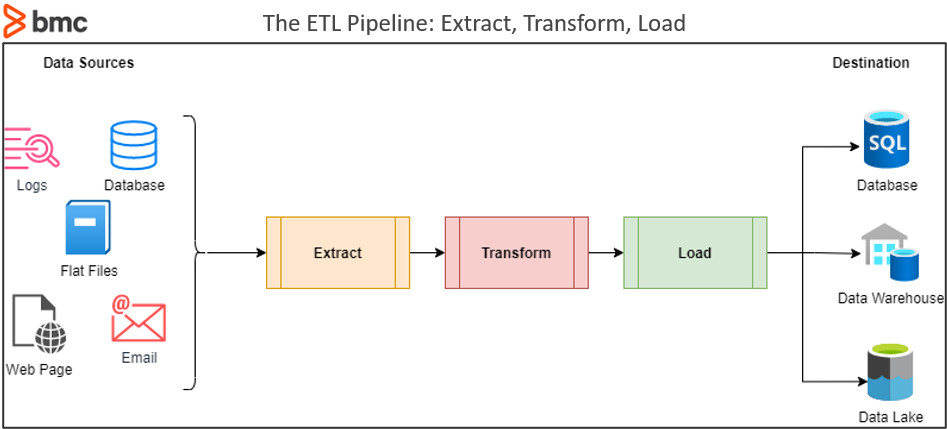

Are you curious about the behind-the-scenes ETL pipelines that turn raw data into practical insights for organizations? In today's data-centric environment, companies depend on handling and analyzing large volumes of data effectively in order to make informed decisions. To accomplish this, organizations frequently use ETL (Extract, Transform, Load) methods and tools such as Azure Data Factory (ADF) to build, manage, and automate data pipelines. In this blog, we will look at the basics of ETL and at how Azure Data Factory simplifies the creation of ETL pipelines.

So, what exactly is ETL?

To understand ETL pipelines, we first need to understand ETL. Before delving into Azure Data Factory, let's take a moment to revisit the fundamentals. ETL stands for Extract, Transform, Load, a three-step approach: extracting data from diverse sources, transforming it into a standardized format, and finally loading it into a designated target location. This process ensures that data is properly prepared for subsequent analysis, reporting, or other downstream operations.

The ETL process forms the bedrock of data integration, helping keep data accurate, consistent, and readily usable across systems and applications. It involves a series of stages that together shape raw data into valuable insights. Let's look more closely at each stage.

- Extract: In the first phase, data is extracted from various sources, such as databases, logs, APIs, and files. These sources may be structured, such as relational databases, or unstructured, such as text files. The primary objective is to bring data from diverse origins together in a centralized repository.
- Transform: Once the data is collected, it often needs to be cleaned, enriched, and transformed to make it suitable for analysis. Data transformations may involve cleaning, aggregating, joining, and even imputing missing values. This phase ensures data quality and consistency.
- Load: After transformation, the data is loaded into a target data store, typically a data warehouse or a data lake. This step organizes the data in a format that's optimized for querying and analytics.

ETL pipelines are a series of data processing steps that automate the ETL process. These pipelines ensure that data is extracted, transformed, and loaded efficiently and reliably. They have become crucial in modern data architecture because they enable organizations to keep their data up to date, clean, and readily available for analysis.

Now that we have a deeper understanding of the ETL process, the next question that naturally arises is: what tool can be used to configure and run it?

Azure Data Factory is a comprehensive, cloud-based solution designed to help organizations orchestrate and automate data integration workflows at scale. It offers an extensive toolkit for building ETL pipelines: extracting data from diverse sources, running data transformation operations, and loading the refined data into target systems or data warehouses.

ADF, part of the Microsoft Azure ecosystem, is a cloud-native data integration service. It is a fully managed, scalable platform for orchestrating and automating data movement and transformation workflows. With ADF, organizations can pull data from many sources, apply transformation activities, and route the processed data to their chosen destinations, including data lakes, databases, and analytics platforms.

Here's how ADF facilitates the creation of ETL pipelines:

- Data Movement: It allows you to connect to various data sources and destinations, both on-premises and in the cloud. You can easily move data from source to target systems.
- Data Transformation: It provides data transformation capabilities using Data Flow, a visual design tool that enables you to build data transformation logic without writing code. You can perform operations like filtering, aggregating, and joining data.
- Orchestration: ADF enables the orchestration of complex workflows. You can schedule and monitor the execution of ETL pipelines, ensuring that data is processed and loaded at the right times.
- Integration: ADF integrates closely with other Azure services such as Azure SQL Data Warehouse, Azure Data Lake Storage, and Azure Blob Storage.
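To make the Extract, Transform, and Load stages concrete, here is a minimal sketch in plain Python. It is not Azure Data Factory code and does not call any ADF API. It only mirrors the three stages on a tiny example, using the standard library. The sample data, the `sales_by_region` table, and the function names are all assumptions made up for illustration.

```python
import csv
import io
import sqlite3
from collections import defaultdict

# Hypothetical raw input standing in for a source file or API response.
RAW_CSV = """region,amount
north,10.5
south,
north,4.5
 South ,7
"""


def extract(text):
    """Extract: read rows from a CSV source into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: normalize region names, impute missing amounts as 0.0,
    and aggregate the amounts per region."""
    totals = defaultdict(float)
    for row in rows:
        region = row["region"].strip().lower()
        amount = float(row["amount"]) if row["amount"].strip() else 0.0
        totals[region] += amount
    return sorted(totals.items())


def load(records, conn):
    """Load: write the aggregated records into a target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales_by_region "
        "(region TEXT PRIMARY KEY, total REAL)"
    )
    conn.executemany(
        "INSERT OR REPLACE INTO sales_by_region VALUES (?, ?)", records
    )
    conn.commit()


def run_pipeline(text, conn):
    """Run the three stages in order and return what landed in the target."""
    load(transform(extract(text)), conn)
    return conn.execute(
        "SELECT region, total FROM sales_by_region ORDER BY region"
    ).fetchall()


if __name__ == "__main__":
    # An in-memory SQLite database stands in for the data warehouse.
    connection = sqlite3.connect(":memory:")
    for region, total in run_pipeline(RAW_CSV, connection):
        print(region, total)
```

In a managed service such as ADF, each of these functions would roughly correspond to a configured activity rather than hand-written code, and scheduling and monitoring would be handled by the platform instead of a `__main__` block.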
